We believe that a norm shift towards closed feedback loops is possible. Signboards asking customers to rate airport services fill the walls at Dulles. Tweeting about a restaurant’s incorrect take-out order will get you a free meal. Incentives propel feedback loops in the private sector: you get a real and immediate benefit from making your voice heard and providing a company with valuable information. But how can we determine when and where this is happening among philanthropy, aid, and development organizations?

We at Feedback Labs (FBL), just like countless other organizations in our sector, are interested in the perennial “impact measurement question”: how do we know if we are making a difference? For organizations like ours, this question can get even thornier, as we have nothing as straightforward to count. In our initial attempts at defining a metric of interest, we decided to focus on the number of organizations that are making feedback a priority, as defined through several criteria. In a recent LabStorm, Marc Maxmeister of Keystone Accountability discussed a tool he built to help FBL explore the universe of organizations that may be interested in feedback, the ones that are already collecting and using feedback, and the subset of those that FBL is already influencing. This allowed us to begin establishing a baseline from which to measure our progress moving forward.

The nitty gritty

First, Marc developed a technique to determine whether a nonprofit organization is interested in feedback. Using Scrapy, an open-source Python framework that “scrapes,” or extracts, website data, we can automatically scan websites (pulled from a registry of dot org websites) for relevant patterns. Unsurprisingly, dot orgs that are commercial enterprises – like craigslist.org – rely heavily on consumer feedback. Therefore, we filtered for traditional NGO language – “mission” and “how we work” – to determine which of the more than 15,000 scanned websites were likely mission-driven organizations, or NGOs as we would typically think of them.
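To make the filtering step concrete, here is a minimal sketch of what a “traditional NGO language” check could look like once a crawler has extracted a page’s text. It is not Marc’s actual code: the function name `looks_mission_driven`, the marker list, and the sample pages are all assumptions for illustration, and the real filter may well have used more phrases or a different matching rule.

```python
import re

# Assumed marker phrases: only the two examples named in this post.
# The real filter may have used a longer or different list.
NGO_MARKERS = ("mission", "how we work")


def looks_mission_driven(page_text, markers=NGO_MARKERS):
    """Return True if any marker phrase appears as whole words in the text."""
    text = page_text.lower()
    return any(
        re.search(r"\b" + re.escape(marker) + r"\b", text) for marker in markers
    )


# Invented sample pages standing in for scraped website text.
pages = {
    "example-ngo.org": "Our mission is to improve rural health. See how we work.",
    "example-classifieds.org": "Post free ads for apartments, jobs, and items for sale.",
}

# Keep only the sites whose text contains traditional NGO language.
likely_ngos = [site for site, text in pages.items() if looks_mission_driven(text)]
```

Matching on whole words keeps a page that only says “permission” from counting as a hit for “mission”, which is one small way a filter like this can be tightened.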

Now that we had a list of organizations that we could reasonably believe were mission-driven organizations, we wanted to know which of those were at least talking about feedback. To do so, we searched those websites for any hit on words specific to feedback loops: ‘feedback’, ‘closing the loop’, ‘shared insight’, and ‘constituent voice’, to name a few.
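The keyword search itself can be sketched in a few lines. This is an illustration under assumptions rather than the tool’s real implementation: it uses only the four terms quoted above (the full list is longer), and the helper names `feedback_hits` and `uses_feedback_language` are made up for this example.

```python
import re

# Only the terms quoted in this post; the full list used in the scan is longer.
FEEDBACK_TERMS = (
    "feedback",
    "closing the loop",
    "shared insight",
    "constituent voice",
)


def feedback_hits(page_text, terms=FEEDBACK_TERMS):
    """Count case-insensitive occurrences of each term, keeping only terms found."""
    text = page_text.lower()
    counts = {term: len(re.findall(re.escape(term), text)) for term in terms}
    return {term: n for term, n in counts.items() if n > 0}


def uses_feedback_language(page_text):
    """A site counts as 'talking about feedback' if any term appears at all."""
    return bool(feedback_hits(page_text))
```

Returning the per-term counts, rather than a single yes or no, leaves room to look later at which phrases an organization actually uses.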

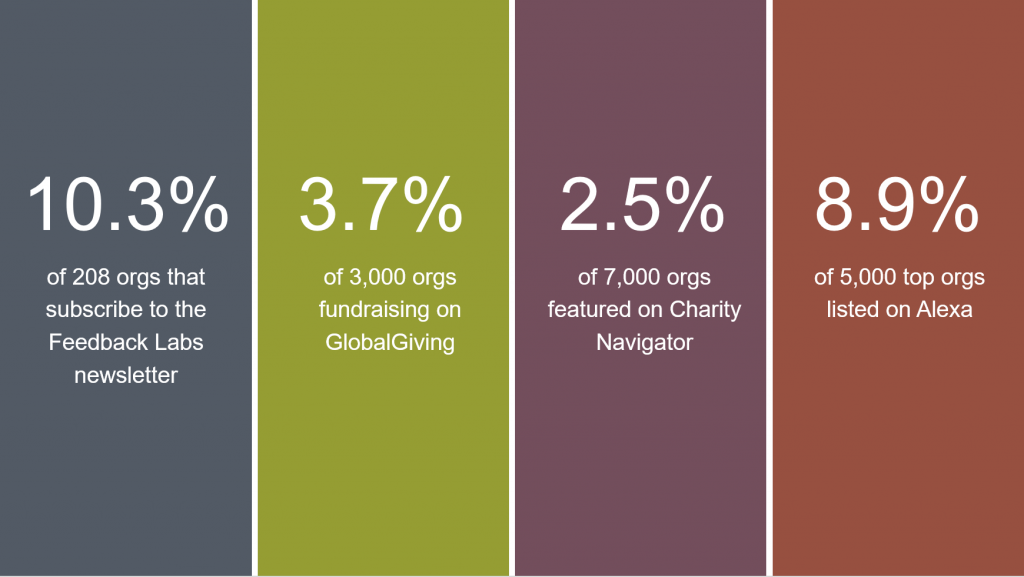

Here’s what we found (all stats from 2016) about who is using feedback loop language on their websites:

**Alexa ranks all websites by traffic and publishes the top 1 million for download. Of these, 50,000 are dot org sites.

We might expect that organizations within the immediate Feedback Labs orbit, such as those that subscribe to our newsletter, would use feedback language more often than the average nonprofit organization. However, we see that the demonstrable effect of FBL’s influence on the language they use to describe their work is small. This gives us an idea of where our impact baseline might be, and in what areas we might have the biggest impact.

Note that these numbers should not be compared across categories, because this is not a benchmarking metric. Each category listed has different goals with regard to feedback use for the organizations it represents or rates. Rather, the value of this tool could lie in tracking the change over time within each category.
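As a rough sketch of what “change over time within categories” could mean in practice, the snippet below computes the share of sites using feedback language for each category at each scan, then compares scans only within the same category. The function names, the record layout, and the toy records are invented for illustration; they are not results from our scan.

```python
from collections import defaultdict


def share_by_category(records):
    """records: iterable of (category, scan_label, uses_feedback_language).

    Returns {category: {scan_label: share of sites using feedback language}}.
    """
    totals = defaultdict(lambda: defaultdict(lambda: [0, 0]))  # [hits, sites]
    for category, scan, uses in records:
        cell = totals[category][scan]
        cell[0] += int(uses)
        cell[1] += 1
    return {
        category: {scan: hits / sites for scan, (hits, sites) in scans.items()}
        for category, scans in totals.items()
    }


def change_within_category(shares, earlier, later):
    """Difference in share between two scans, computed per category only."""
    return {
        category: scans[later] - scans[earlier]
        for category, scans in shares.items()
        if earlier in scans and later in scans
    }


# Invented toy records, not real results.
records = [
    ("category_a", "scan_1", True),
    ("category_a", "scan_1", False),
    ("category_a", "scan_2", True),
    ("category_a", "scan_2", True),
    ("category_b", "scan_1", False),
    ("category_b", "scan_2", False),
]
shares = share_by_category(records)
changes = change_within_category(shares, "scan_1", "scan_2")
```

Because each category is only ever compared with its own earlier scan, the differing goals of the categories do not distort the result.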

While we are not comparing across categories as a matter of assessing a comparable metric, we can hypothesize about why the Alexa orgs, which can be thought of as the top 5000 mission-driven organizations with the most traffic, had a higher incidence of feedback language than some of our other categories, notably organizations that fundraise on GlobalGiving and organizations rated by Charity Navigator. This might be because these Alexa-listed orgs are better at communicating, since they are concerned with cultivating a customer base (meaning they have something to sell or a product that otherwise competes in the market). Further analysis could shed more light on the characteristic differences among these groups, which could point us in interesting directions as we (Feedback Labs) zero in on our target audience and further define baseline metrics.

So what’s next?

This system makes it easy to collect data about organizations within (or that we want to be in) our sphere of influence. We made several assumptions in establishing this baseline:

- Organizations who talk about feedback are actually using it. We know that this is not true for 100% of organizations. It may not even be true for 50%! But we’re using the fact that they are even talking about a feedback loop as evidence that they are on the right track.

- Organizations who do use feedback actually talk about it on their website. We know that nonprofits are often under-resourced, and may not always do the best job of talking about their feedback work. Further, since the vocabulary around feedback loops is still evolving, it’s entirely possible that organizations are talking about their feedback work in ways that our scan didn’t pick up.

- We used good filters. Obtaining a .org website domain isn’t like obtaining a .gov site. There is no regulation around using .org domains (not to mention the thousands of new domain extensions that recently became available), so there’s no guarantee that .org websites are mission-driven organizations (in fact, we know that most .org sites with the highest traffic are not). We think we put good filters in place to identify mission-driven organizations, but there is still room for refining the filters.

Even given these caveats, we’re very excited by this new way of measuring our impact, starting from a reasonable baseline. Measuring impact in this way will push the feedback agenda forward as we:

- Establish a baseline of where, when, and how feedback language is being used.

- Refine who our target audience is.

- Regularly measure the use of language on those sites over time.

- Narrow down searches for specific words or phrases to find different dimensions of feedback-oriented work.

- Pair this data with other measures to determine the real-world implications – are these organizations walking their talk?

- Operationalize this combined data to determine the key influencers and make smarter decisions on the means of moving social norms.

By identifying the organizations we want to – and realistically can – influence, we are better positioned to establish a norm of “closing the loop,” and to know how close we are to doing so. We look forward to continuing to iterate on this baseline metric, and welcome your thoughts and experiences with impact measurement in your own work.

Many thanks to the team at Charity Navigator, who shared data with us as we began developing this baseline metric. We applaud their willingness to collaborate with us and look forward to continuing to explore how we might define and develop tools to accurately and usefully measure our work.

Interested in the metrics discussed in this post? Keystone Accountability can provide this approach to any advocacy organization. It works best when you are trying to track use of specific phrases and jargon across the whole sector or with a known list of websites. Feel free to reach out to Marc at [email protected].

LabStorms are collaborative brainstorm sessions designed to help an organization wrestle with a challenge related to feedback loops, with the goal of providing actionable suggestions. LabStorms are facilitated by FBL members and friends who have a prototype, project idea, or ongoing experiment on which they would like feedback. Here, we provide report-outs from LabStorms. If you would like to participate in an upcoming LabStorm (either in person or by videoconference), please drop Sarah a note at [email protected].